Neural network based AI model for lung health assessment

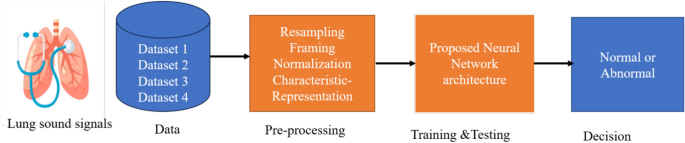

The methodology proposed in this study is illustrated in Fig. 1. The steps followed can be broadly divided into data acquisition, pre-processing, training and testing, and final decision. These steps are discussed in detail in the following sections.

Block diagram of the proposed methodology.

Dataset

In this work, two widely used public datasets are employed: the ICBHI 2017 challenge dataset and the KAUH lung sound dataset. Two more datasets are prepared by combining these two public datasets, and a fifth dataset is used for cross-dataset testing. Table 1 lists the number of signals in each class for the first three datasets.

Dataset 1 The ICBHI 2017 challenge dataset is a publicly available collection of lung sound recordings created for research and evaluation [4]. It comprises 920 lung sound recordings obtained from 126 individuals with lung diseases such as pneumonia and asthma. The recordings were collected with digital stethoscopes and stored in WAV format, with a bit depth of 16 bits and a sampling rate of 4,000 Hz. Each recording lasts up to 30 seconds and is labeled with the patient’s diagnosis. The dataset is divided into a training set of 689 recordings and a test set of 231 recordings. In addition to the audio recordings, it provides patient information such as gender, age, and medical history, as well as annotations of lung sound events such as wheezes, crackles, and normal sounds.

Dataset 2 The KAUH lung sound dataset includes 337 recordings of lung sounds obtained from 112 subjects: 35 healthy individuals and 77 patients with pulmonary diseases [42]. The recordings were collected in a quiet environment at King Abdullah University Hospital (KAUH) using an electronic stethoscope and stored in WAV format. The dataset also provides demographic information such as age and gender, and each recording is annotated with the type of disease present. Recordings last from 5 to 30 seconds. The primary goal of this dataset is to facilitate research on automatic lung sound analysis for detecting and diagnosing pulmonary diseases.

Dataset 3 Dataset 1 and Dataset 2 are combined to create the third dataset. It contains a total of 1,257 signals (920 + 337), covering conditions such as normal, COPD, pneumonia, BRON, heart failure, URTI, LRTI, lung fibrosis, and pleural effusion.

Dataset 4 This dataset is created by regrouping the signals of Dataset 1 and Dataset 2 into three classes: normal, chronic, and non-chronic. It includes 140 normal, 826 chronic, and 291 non-chronic signals, 1,257 in total.

Dataset 5 The dataset presented in [43] comprises 12-channel lung sound recordings from each participant and covers five COPD severity classes. In this study, it is used as a test dataset to assess cross-dataset performance.

Pre-processing

For pre-processing, the signals are resampled, framed, and normalized. All signals must share a common sampling rate so that the extracted features are comparable; therefore, every signal is resampled to 4000 Hz. The resampled signals are then segmented into 3-second frames. Processing long lung sound recordings is computationally demanding because of the amount of information they contain, so splitting them into shorter frames reduces the computational cost of the subsequent analysis and makes the data easier to handle. Framing also helps accommodate variations in the duration of the different components present in lung sound signals.

The frames are then normalized. Normalization mitigates the influence of differences in magnitude and variance across features or variables: when input features have different scales or ranges, features with larger magnitudes or variances may have a disproportionate influence on the model, which biases it and degrades its performance. Normalization also helps avoid numerical instability. In this work, min-max normalization is used, which rescales the values of a signal to a specified range, typically 0 to 1. Mathematically, it can be expressed as

$$\begin{aligned} x_{norm} = \frac{x - x_{\min }}{x_{\max } - x_{\min }} \end{aligned}$$

(1)

where x is the original signal, \(x_{min}\) is its minimum value, and \(x_{max}\) is its maximum value. This process rescales the signal values to the desired range while preserving the relative differences between samples. The normalized signal is then passed on for further processing and feature extraction.
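The pre-processing chain above (resampling, 3 s framing, min-max normalization per Eq. (1)) can be sketched with NumPy as follows. This is a minimal illustration, not the authors’ code: the text does not name a resampling method, so Fourier-domain resampling is assumed; trailing samples that do not fill a complete frame are assumed to be discarded; and the function names (`resample_fft`, `split_frames`, `min_max_normalize`, `preprocess`) are hypothetical.

```python
import numpy as np

FS_TARGET = 4000     # common sampling rate used in the text (Hz)
FRAME_SECONDS = 3    # frame duration used in the text (s)


def resample_fft(x, fs_in, fs_out=FS_TARGET):
    """Fourier-domain resampling (assumption: the text does not name a method).

    Truncating the spectrum when downsampling acts as an ideal low-pass
    anti-aliasing filter; zero-padding it when upsampling interpolates.
    """
    x = np.asarray(x, dtype=float)
    n_out = int(round(len(x) * fs_out / fs_in))
    spectrum = np.fft.rfft(x)
    n_bins = n_out // 2 + 1
    if n_bins <= len(spectrum):
        spectrum = spectrum[:n_bins]
    else:
        spectrum = np.pad(spectrum, (0, n_bins - len(spectrum)))
    # irfft divides by n_out, so rescale to keep the original amplitude
    return np.fft.irfft(spectrum, n=n_out) * (n_out / len(x))


def split_frames(x, fs=FS_TARGET, seconds=FRAME_SECONDS):
    """Cut a signal into non-overlapping frames; a short tail is dropped (assumption)."""
    frame_len = int(fs * seconds)
    n_frames = len(x) // frame_len
    return x[: n_frames * frame_len].reshape(n_frames, frame_len)


def min_max_normalize(frames):
    """Eq. (1) applied to each frame along the last axis; constant frames map to 0."""
    lo = frames.min(axis=-1, keepdims=True)
    hi = frames.max(axis=-1, keepdims=True)
    span = np.where(hi > lo, hi - lo, 1.0)
    return (frames - lo) / span


def preprocess(x, fs_in):
    """Resample to 4000 Hz, split into 3 s frames, min-max normalize each frame."""
    return min_max_normalize(split_frames(resample_fft(x, fs_in)))
```

For a hypothetical 10 s recording sampled at 44.1 kHz, `preprocess(x, 44100)` returns an array of shape (3, 12000): the resampled signal has 40,000 samples, and the last 4,000 (1 s) do not fill a complete frame.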

Signal representation

The normalized frames are analyzed using a bank of zero-phase filters. Zero-phase filters are commonly used to enhance signal quality and remove noise or unwanted components from a signal [44]. Unlike conventional causal filters, which introduce frequency-dependent delay (phase distortion), zero-phase filters introduce no delay. They preserve the phase relationships among the frequency components of a signal, which is especially useful in audio signal processing, where accurate analysis depends on phase information. Zero-phase filtering is therefore often applied as a pre-processing step in machine learning to improve the consistency and quality of signals before they are fed to a neural network for training or classification. The frames are partitioned into distinct frequency bands using the zero-phase filters defined by

$$\phi_{j}(m) = \begin{cases} 1, & \text{for } (M_{j-1}+1) \le m \le M_{j} \\ & \text{or } (N - M_{j}) \le m \le (N - M_{j-1} - 1), \\ 0, & \text{otherwise,} \end{cases}$$

(2)

where \(j \in \{1, 2, \dots, L+1\}\). The filters are specified by the cutoffs \(M_j\), given as DFT bin indices, with \(M_0 = 0\), \(M_1 = 0.5N/f_s\) (corresponding to 0.5 Hz), and \(M_{L+1} = N/2\), where N and \(f_s\) denote the frame length and the sampling frequency, respectively. The filtered components are obtained as

$$\begin{aligned} \begin{aligned} y_j[n]&= \frac{1}{N} \sum _{m=0}^{N-1} F(m)\phi _{j+1}(m) \exp (j2\pi mn/N) \\&\quad \text {for} \quad n=0,1,2,\dots ,N-1, \end{aligned} \end{aligned}$$

(3)

with \(j \in \{1, 2, \dots, L\}\), where F(m) is the discrete Fourier transform (DFT) of the frame f[n] (in the exponentials, j denotes the imaginary unit), i.e.,

$$\begin{aligned} F(m) & = \sum\limits_{{n = 0}}^{{N - 1}} f [n]\exp ( - j2\pi mn/N) \\ & {\text{for}}\quad m = 0,1,2, \ldots ,N - 1. \\ \end{aligned}$$

(4)
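Because Eq. (2) specifies the cutoffs \(M_j\) as DFT bin indices, a cutoff frequency \(f_c\) maps to the bin \(M = f_c N / f_s\), and adjacent bins are \(f_s/N\) Hz apart. As a worked example, assuming the 3 s frames sampled at 4000 Hz produced by the pre-processing step:

$$\begin{aligned} N &= 3\,\text{s} \times 4000\,\text{Hz} = 12000, \qquad \frac{f_s}{N} = \frac{1}{3}\,\text{Hz}, \qquad M = \frac{f_c N}{f_s} = 3 f_c, \\ M_1 &= \frac{0.5 \times 12000}{4000} = 1.5, \qquad f_c = 125\,\text{Hz} \Rightarrow M = 375, \qquad M_{L+1} = \frac{N}{2} = 6000 \;\Leftrightarrow\; 2000\,\text{Hz}. \end{aligned}$$

Since \(M_1 = 1.5\) is not an integer, the first condition in Eq. (2) admits only m = 1 (about 0.33 Hz) for \(\phi_1\), together with its mirror bin m = N - 1.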

The first filter \(\phi _1(m)\) removes baseline wander noise [45], which may arise from slight patient movements during recording. After baseline wander removal, L components are extracted according to equation (3). Because the filters are real and symmetric masks applied in the DFT domain, the extracted components \(y_j[n]\) are free of any time delay. The cutoff frequencies can be chosen using various schemes, such as uniform, dyadic, or user-defined spacing. In this study, approximately uniform cutoffs of 125, 250, 500, 750, 1000, 1250, 1500, and 1750 Hz are used. To eliminate heart sound noise, which lies in the 0.5 to 150 Hz range, the first zero-phase filter is given a cutoff frequency of 125 Hz. This removes heart sound noise while preserving the lower-frequency components of lung sounds, which span 100 Hz to 2000 Hz. These values were chosen because they weight all frequencies approximately equally, and this choice resulted in the best classification performance.
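Eqs. (2)-(4) amount to ideal masking in the DFT domain followed by an inverse DFT, which can be sketched with NumPy as below. Several details are assumptions rather than statements from the text: the band edges are taken as a 0.5 Hz baseline-wander cutoff followed by the eight listed cutoffs, bin indices are floored to integers, and the lowest component (roughly 0.5 to 125 Hz, the heart-sound range) is discarded so that eight lung-sound bands remain. The names `band_masks`, `zero_phase_components`, and `CUTOFFS_HZ` are hypothetical.

```python
import numpy as np

# Band edges in Hz (assumption: a 0.5 Hz baseline-wander cutoff M_1 followed by
# the eight cutoffs listed in the text); the last band runs up to fs/2.
CUTOFFS_HZ = (0.5, 125, 250, 500, 750, 1000, 1250, 1500, 1750)


def band_masks(n, fs, cutoffs_hz=CUTOFFS_HZ):
    """Ideal DFT-domain masks phi_1 ... phi_{L+1} following Eq. (2).

    Edges are converted from Hz to bin indices (M = f * n / fs) and floored,
    an assumption that makes the bands tile bins 1..n-1 without gaps.
    """
    edges = [0] + [int(np.floor(f * n / fs)) for f in cutoffs_hz] + [n // 2]
    m = np.arange(n)
    masks = []
    for lo, hi in zip(edges[:-1], edges[1:]):          # (M_{j-1}, M_j)
        positive = (m >= lo + 1) & (m <= hi)           # M_{j-1}+1 <= m <= M_j
        mirrored = (m >= n - hi) & (m <= n - lo - 1)   # N-M_j <= m <= N-M_{j-1}-1
        masks.append((positive | mirrored).astype(float))
    return np.array(masks)


def zero_phase_components(frame, fs=4000, cutoffs_hz=CUTOFFS_HZ, n_discard=1):
    """Return the sub-band components y_j[n] of one frame, Eqs. (3)-(4).

    phi_1 (baseline wander) is never used; n_discard drops the lowest remaining
    components (assumption: the heart-sound band), leaving 8 bands by default.
    """
    frame = np.asarray(frame, dtype=float)
    spectrum = np.fft.fft(frame)                       # F(m), Eq. (4)
    masks = band_masks(len(frame), fs, cutoffs_hz)
    # Eq. (3): inverse DFT of F(m) * phi_{j+1}(m) for j = 1..L. Each mask is real
    # and symmetric (phi(m) = phi(N - m)), so the output is real up to rounding
    # and has no phase shift relative to the input.
    components = np.fft.ifft(spectrum[None, :] * masks[1:], axis=-1).real
    return components[n_discard:]
```

Because the masks are real and symmetric, a 300 Hz tone in a frame reappears unchanged, with no delay, in the 250 to 500 Hz component (index 1 of the returned array).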

Each sub-band is represented by its key characteristics: the \(L^p\) norm (p = 0.25, 0.5), kurtosis (Kurt), mean absolute deviation (MAD), entropy (Ent), and standard deviation (SD). These characteristics are defined as

$$\begin{aligned} Kurt_j= & \sum _{n=0}^{N-1}\left( \frac{y_j[n]-\mu _j}{\sigma _j}\right) ^4, \end{aligned}$$

(5)

$$\begin{aligned} L_j^p= & \left( \sum _{n=0}^{N-1}|y_j[n]|^p \right) ^{\frac{1}{p}}, \end{aligned}$$

(6)

$$\begin{aligned} MAD_j= & \frac{1}{N}\sum _{n=0}^{N-1}|y_j[n]-\mu _{j}|, \end{aligned}$$

(7)

$$\begin{aligned} Ent_j= & -\sum _{i}p_{ij}\log_2(p_{ij}), \end{aligned}$$

(8)

$$\begin{aligned} SD_j= & \sigma _j = \sqrt{\frac{1}{N} \sum _{n=0}^{N-1}(y_j[n] - \mu _j)^2} \end{aligned}$$

(9)

where \(\mu _{j}\) and \(\sigma _j\) denote the mean and standard deviation of the component \(y_j[n]\), respectively, and \(p_{ij}\) denotes the probability of the i-th distinct sample value of \(y_j[n]\). A set of eight zero-phase filters is used to extract frequency components from the lung sound signals, and six characteristics are computed from each frequency band. This representation captures band-wise statistical properties of the lung sound signals that support accurate classification and diagnosis of respiratory conditions by the proposed network.
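The descriptors of Eqs. (5)-(9) can be computed per sub-band as in the NumPy sketch below. The text does not specify how \(p_{ij}\) is estimated, so a 64-bin amplitude histogram is assumed. Eq. (5) is implemented as printed, without a 1/N factor, so it equals N times the conventional kurtosis. The sketch returns all six descriptors for every band; which five per band form the 1×40 network input is not stated, so no selection is made. The function names are hypothetical.

```python
import numpy as np


def band_features(y, n_bins=64):
    """Six descriptors of one sub-band component, Eqs. (5)-(9).

    Assumption: p_ij in Eq. (8) is estimated from an n_bins amplitude histogram.
    """
    y = np.asarray(y, dtype=float)
    mu = y.mean()
    sigma = y.std()                                   # Eq. (9), 1/N normalization
    z = (y - mu) / sigma if sigma > 0 else np.zeros_like(y)
    kurt = np.sum(z ** 4)                             # Eq. (5) as printed (no 1/N)
    l_025 = np.sum(np.abs(y) ** 0.25) ** (1 / 0.25)   # Eq. (6), p = 0.25
    l_05 = np.sum(np.abs(y) ** 0.5) ** (1 / 0.5)      # Eq. (6), p = 0.5
    mad = np.mean(np.abs(y - mu))                     # Eq. (7)
    counts, _ = np.histogram(y, bins=n_bins)
    p = counts[counts > 0] / counts.sum()             # empty bins contribute 0
    ent = -np.sum(p * np.log2(p))                     # Eq. (8)
    return np.array([kurt, l_025, l_05, mad, ent, sigma])


def frame_features(components):
    """Concatenate the descriptors of every sub-band (one band per row)."""
    return np.concatenate([band_features(y) for y in components])
```

Applied to eight sub-band components of one frame, `frame_features` yields a vector of 8 × 6 = 48 values in the order Kurt, \(L^{0.25}\), \(L^{0.5}\), MAD, Ent, SD for each band.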

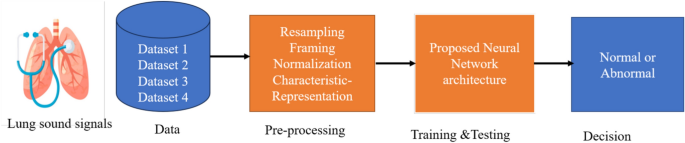

Proposed neural network model

Neural networks (NNs) have emerged as a promising class of machine learning models for a wide range of classification problems. Inspired by the structure of the human brain, NNs are composed of interconnected neurons that process input data [46]. Their use in medical research has grown rapidly over the past few decades [47]. To apply an NN to lung disease detection, the lung sound signals must first be pre-processed and represented by their core attributes. The processed signals are then used to train the NN on a dataset of lung sound signals labeled with the corresponding disease categories. Once trained, the NN can predict the disease category of new, unlabeled lung sound signals. NNs have shown success in this field and remain an active area of research aimed at improving accuracy and performance. In this work, we propose a simple NN architecture for the training and classification of lung diseases, implemented using the Keras application programming interface (API).

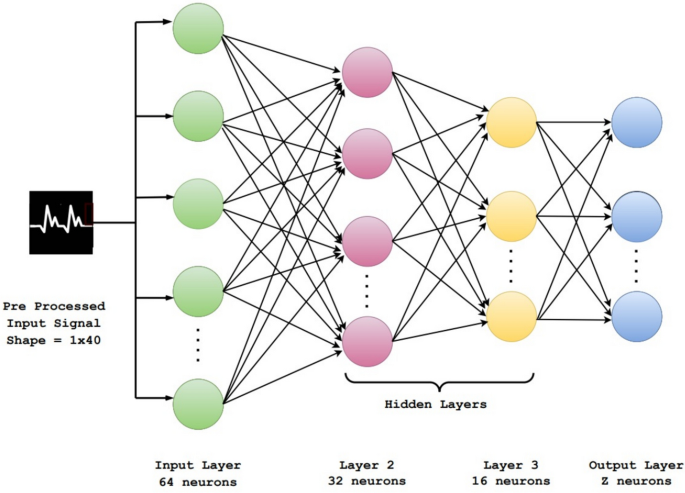

The proposed NN architecture is a stack of fully connected layers, as depicted in Figure 2. The input has shape \(1\times 40\), since each lung sound signal is represented by 8 sub-bands with 5 basic characteristics per band. The first layer is a dense layer with 64 nodes (neurons), the second has 32 nodes, and a third hidden layer has 16 nodes. All of these layers use the rectified linear unit (ReLU) activation function, a simple function that introduces non-linearity into the network’s computations. ReLU is defined as follows:

$$\begin{aligned} ReLU(x) = max(0, x) \end{aligned}$$

(10)

If the input value x is equal to or greater than zero, ReLU returns the input value. However, if the input value is negative, ReLU outputs zero.

Finally, the output layer has z nodes, where z is the number of classes (or diseases) in the dataset. For binary classification, which determines whether a signal is normal or abnormal, a sigmoid activation function is used, because it outputs a value between 0 and 1 that is interpreted as the probability of the positive class. For multi-class classification, a softmax activation function is used.

To mitigate over-fitting, a dropout rate of 0.25 is applied between the hidden layers. Table 2 lists the parameters of the NN architecture, such as the learning rate, batch size, optimizer, number of iterations, and dropout rate. The network is trained for 200 epochs using the Adam optimizer with categorical cross-entropy as the loss function. The model’s complexity is balanced against the risk of over-fitting, and the hyperparameters were tuned: several parameter combinations were tested, and the final selection was based on the highest validation accuracy, provided that learning curves and loss monitoring showed no over-fitting.
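The layer stack described above can be mirrored in a short NumPy sketch. The authors implement the model in Keras; this version only reproduces the layer sizes, ReLU activations (Eq. (10)), dropout between hidden layers, the sigmoid or softmax output, and the categorical cross-entropy loss, and it does not implement Adam training. The input width of 40 follows Fig. 2; Glorot-uniform initialization is assumed because it is the Keras default for `Dense` layers. All function names are hypothetical.

```python
import numpy as np

HIDDEN = (64, 32, 16)   # hidden layer widths from the text
DROPOUT = 0.25          # dropout rate between hidden layers


def relu(x):
    return np.maximum(0.0, x)                          # Eq. (10)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))      # shifted for numerical stability
    return e / e.sum(axis=-1, keepdims=True)


def init_params(n_in=40, n_out=2, seed=0):
    """Glorot-uniform weights and zero biases (the Keras Dense defaults)."""
    rng = np.random.default_rng(seed)
    sizes = (n_in, *HIDDEN, n_out)
    params = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        limit = np.sqrt(6.0 / (fan_in + fan_out))
        params.append((rng.uniform(-limit, limit, (fan_in, fan_out)), np.zeros(fan_out)))
    return params


def forward(params, x, head="softmax", training=False, rng=None):
    """Dense(64)-Dense(32)-Dense(16) with ReLU, then a sigmoid or softmax output."""
    if rng is None:
        rng = np.random.default_rng()
    h = x
    n_hidden = len(params) - 1
    for i, (w, b) in enumerate(params[:-1]):
        h = relu(h @ w + b)
        if training and i < n_hidden - 1:              # dropout only between hidden layers
            keep = rng.random(h.shape) >= DROPOUT
            h = h * keep / (1.0 - DROPOUT)             # inverted dropout keeps the expected scale
    w, b = params[-1]
    logits = h @ w + b
    return sigmoid(logits) if head == "sigmoid" else softmax(logits)


def categorical_cross_entropy(probs, y_onehot, eps=1e-12):
    """Mean loss over a batch; eps avoids log(0)."""
    return -np.mean(np.sum(y_onehot * np.log(probs + eps), axis=-1))
```

With 40 inputs and z = 5 classes, the network has 2,624 + 2,080 + 528 + 85 = 5,317 trainable parameters. In Keras, the same stack would be assembled from `Dense` and `Dropout` layers and compiled with the Adam optimizer and categorical cross-entropy; the learning rate and batch size in Table 2 are not reproduced here.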

Proposed NN architecture with four layers.

Evaluation metrics

The proposed methodology is evaluated on a stratified 80-20 train-test split, using the following metrics [48]:

$$\begin{aligned} \text {Accuracy} (Acc)= & \frac{TP+TN}{TP+TN+FP+FN} \end{aligned}$$

(11)

$$\begin{aligned} \text {Sensitivity} (Sen)= & \frac{TP}{TP+FN} \end{aligned}$$

(12)

$$\begin{aligned} \text {Specificity} (Spe)= & \frac{TN}{TN+FP} \,, \end{aligned}$$

(13)

where TP (true positives) is the number of diseased cases correctly diagnosed, TN (true negatives) is the number of cases correctly identified as not having the disease, FP (false positives) is the number of false detections, and FN (false negatives) is the number of missed detections.
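The evaluation protocol and Eqs. (11)-(13) can be sketched as follows. The split is assumed to be a per-class random 80/20 split, and for multi-class problems each class is assumed to be scored one-vs-rest through the `positive` argument; neither detail is stated in the text, and the function names are hypothetical.

```python
import numpy as np


def stratified_split(labels, test_frac=0.2, seed=0):
    """Indices for a per-class random train/test split (80/20 by default)."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    train, test = [], []
    for c in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == c))
        n_test = int(round(test_frac * len(idx)))
        test.extend(idx[:n_test])
        train.extend(idx[n_test:])
    return np.sort(np.array(train, dtype=int)), np.sort(np.array(test, dtype=int))


def confusion_counts(y_true, y_pred, positive=1):
    """TP, TN, FP, FN treating `positive` as the disease class (one-vs-rest)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    t, p = y_true == positive, y_pred == positive
    return int(np.sum(t & p)), int(np.sum(~t & ~p)), int(np.sum(~t & p)), int(np.sum(t & ~p))


def metrics(y_true, y_pred, positive=1):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred, positive)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),                    # Eq. (11)
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),    # Eq. (12)
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),    # Eq. (13)
    }
```

Applied to the Dataset 4 class sizes (140, 826, and 291 signals), `stratified_split` places 28, 165, and 58 signals of the respective classes in the test set.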
