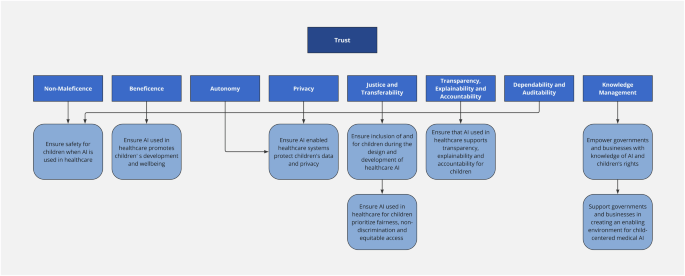

Ethical considerations in AI for child health and recommendations for child-centered medical AI

First described in 1979, Beauchamp and Childress’s landmark work17 on the foundational principles of medical ethics is ever more important in the ethical debate surrounding the development and use of AI-enabled applications. The key principles it set out (autonomy, beneficence, non-maleficence, and justice) have been cornerstones of ethical discussion in healthcare. Jobin et al. identified further ethical concerns specific to AI18, including transparency, privacy, and trust. The American Medical Informatics Association (AMIA) has also defined additional AI principles, including dependability, auditability, knowledge management, and accountability7. Unfortunately, some of these principles may conflict with one another, such as justice and privacy, as illustrated below.

Non-maleficence

Non-maleficence implies the need for AI to be safe and not to cause harm18,19. References to non-maleficence in AI ethics guidelines occur more commonly than references to beneficence18, likely reflecting society’s concern that AI may intentionally or unintentionally inflict harm. Prioritizing non-maleficence over beneficence by no means suggests that AI systems are fraught with risk or harm; rather, it reflects how ethical issues are approached in the context of AI. However promising its potential benefits, an AI system for child health must not be implemented without convincing evidence that it causes no harm, or that its benefits can confidently be expected to outweigh any harm. Evidence-based health informatics (EBHI) supports the use of concrete scientific evidence in decisions about implementing technological healthcare systems20.

In embryology, in vitro fertilization involves selecting the best embryo for transfer, and ethical principles guide the selection of one embryo over another. The ‘best’ embryo has the highest potential to result in a viable pregnancy, while avoiding the birth of children with conditions that would shorten their lifespan or significantly reduce their quality of life9. AI has been used to rank embryos using images and time-lapse videos as input21. AI has also been used for non-invasive preimplantation genetic screening of embryos, without the need for an embryo biopsy22. In 2019, scientists at a fertility clinic in Australia developed a non-invasive test (that did not use AI) for preimplantation genetic screening of embryos23,24 and introduced it prematurely into clinical use9. There was a marked discrepancy between the results of validation studies and real-world clinical experience25. Importantly, embryos erroneously deemed genetically abnormal by the novel test, and therefore unsuitable for transfer, appear to have been discarded26, resulting in a class action suit in Australia27. Although this test did not use AI, it nevertheless serves as a cautionary tale. Experts have argued that prioritizing embryos for transfer using novel technologies such as AI is acceptable, but that discarding embryos on the basis of unproven advances is not9. This emphasizes the need for caution and a balanced approach that ensures the benefits of novel technologies outweigh any potential harm.

AI might deepen existing moral controversies. For example, coupled with whole-genome or whole-exome sequencing, AI could facilitate large-scale genomic examination of embryos for novel disorders, dispositions, or polygenic risk of disease or non-disease traits (such as intelligence). This would move beyond targeted preimplantation genetic diagnosis to mass prenatal “screening”, raising significant ethical issues and potentially even facilitating polygenic editing.

AI systems used in healthcare are often designed to include a “human-in-the-loop”: the prediction made by the AI system is checked by a human expert, so that the AI augments, but does not automate, decision-making. The knowledge, skills, experience, and judgment of the healthcare professional are important for case contextualization, as no case is “standard” and each child comes with their own unique medical, family, and social history. Although having a human in the loop decreases the risk of AI causing harm, it also risks introducing human bias and thereby reducing justice and fairness. AI-enabled decisions are more objective and reproducible, unless the training data were biased or were derived from a population different from the one in which the system is being used.
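To make the “augment, not automate” pattern concrete, here is a minimal Python sketch of a human-in-the-loop decision step. It is an illustration under stated assumptions, not a description of any particular clinical system: the names `AISuggestion`, `FinalDecision`, `finalize`, and `override_rate` are hypothetical. The model’s suggestion only becomes a decision once a clinician confirms or overrides it, and the original suggestion is kept alongside the final decision so that overrides can later be reviewed for the human bias mentioned above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AISuggestion:
    label: str          # hypothetical: candidate finding proposed by the model
    probability: float  # model's estimated probability for that label


@dataclass(frozen=True)
class FinalDecision:
    label: str     # the decision that is actually acted on
    source: str    # "clinician-confirmed" or "clinician-override"
    ai_label: str  # the model's original suggestion, kept for later review


def finalize(suggestion: AISuggestion, clinician_accepts: bool,
             clinician_label: Optional[str] = None) -> FinalDecision:
    """Turn an AI suggestion into a decision only after clinician review."""
    if clinician_accepts:
        return FinalDecision(suggestion.label, "clinician-confirmed", suggestion.label)
    if clinician_label is None:
        # An override must record what the clinician decided instead.
        raise ValueError("an override requires the clinician's own label")
    return FinalDecision(clinician_label, "clinician-override", suggestion.label)


def override_rate(decisions: List[FinalDecision]) -> float:
    """Fraction of decisions in which the clinician overrode the model."""
    if not decisions:
        return 0.0
    overrides = sum(1 for d in decisions if d.source == "clinician-override")
    return overrides / len(decisions)
```

Computing `override_rate` separately for different patient groups would be one hypothetical way to check whether clinician overrides cluster in particular populations.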

AI systems used outside healthcare settings can also affect children’s health. Social media and streaming platforms are changing how children interact with content. With touchscreen technology and intuitive user interfaces, even very young children can access these applications with ease28. AI recommendation algorithms are optimized to keep children engaged on the platform for extended periods rather than to prioritize content quality29. Multiple studies have highlighted the adverse effects of prolonged screen time on children’s cognitive and neurobehavioral development30,31 and on the development of obesity32 and its related complications. Excessive screen time is positively associated with behavioral and conduct problems, developmental delay, speech disorders, learning disabilities, autism spectrum disorders, and attention deficit hyperactivity disorder, especially in preschoolers and boys, with significant dose-response relationships30.

Beneficence

Beneficence, or promoting good, can be seen as benefiting an individual or a group of persons collectively18. AI must benefit all children, including children of different ages, ethnicities, geographical regions, and socioeconomic conditions, and including the most marginalized children and children from minority groups.

In healthcare, AI has demonstrated its ability to benefit the care of sick children in out-patient33,34 and in-patient35 settings. In genomics, AI has been used in both prenatal and pediatric settings. AI can use genotypes to predict phenotypes (genotype-to-phenotype) and phenotypes to predict genotypes (phenotype-to-genotype). Identifai Genetics can determine in the first trimester of pregnancy whether there is a higher chance that a baby will be born with a genetic disorder, using cell-free fetal DNA circulating in the maternal blood33; this allows in-utero treatment of some genetic diseases. Face2Gene uses deep learning and computer vision to convert patient images into de-identified mathematical facial descriptors36,37. The patient’s facial descriptors are compared with syndrome gestalts to quantify similarity (gestalt scores) and generate a prioritized list of syndromic diagnoses36,37. Face2Gene supports over 7000 genetic disorders34 and is routinely used in clinical practice by geneticists.
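The following is not Face2Gene’s actual algorithm; it is a minimal Python sketch of the general idea of scoring a patient’s descriptor against reference “gestalts” and ranking the results. It assumes, purely for illustration, that descriptors and gestalts are fixed-length numeric vectors and that similarity is measured by cosine similarity; the syndrome names and vectors are hypothetical toy values.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


def rank_syndromes(patient: Sequence[float],
                   gestalts: Dict[str, Sequence[float]],
                   top_k: int = 3) -> List[Tuple[str, float]]:
    """Return the top_k (syndrome, similarity score) pairs, highest score first."""
    scores = [(name, cosine_similarity(patient, g)) for name, g in gestalts.items()]
    scores.sort(key=lambda item: item[1], reverse=True)
    return scores[:top_k]
```

For example, with toy gestalts `syndrome_X = [1, 0, 0]`, `syndrome_Y = [0, 1, 0]`, and `syndrome_Z = [0.7, 0.7, 0]`, a patient vector `[0.9, 0.1, 0]` is ranked X, then Z, then Y. A real system would also need to decide how many candidates to show and how to present low scores to clinicians.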

An AI platform combining genomic sequencing with automated phenotyping using natural language processing prospectively diagnosed three critically ill infants in intensive care, with a mean time saving of 22 h, and the early diagnosis affected treatment in each case35. In such time-critical scenarios, rapid AI-assisted diagnosis can meaningfully improve clinical outcomes for seriously ill children with rare genetic diseases. It also allows timely transfer to palliative care, and avoidance of invasive procedures, when the diagnosis is incompatible with life.

Autonomy

Autonomy can be viewed as positive freedom or negative freedom18. Positive freedom is the capacity for self-determination38, whereas negative freedom is freedom from interference, such as from technological experimentation39 or surveillance40.

Unlike adults, who can consent for themselves, children require a parent or legal guardian to consent to the collection of their medical data or the use of an AI-enabled device in their care. Decisionally competent adolescents have developing autonomy, and their consent should be sought as well as that of their parents. Gillick competence can be applied when determining whether a child under 16 is competent to consent41,42. Gillick competence depends on the child’s maturity and intelligence, and higher levels of competence are required for more complicated decisions. Consent obtained from a Gillick-competent child cannot be overruled by the child’s parents; however, when a Gillick-competent child refuses consent, consent can be obtained from the child’s parent or guardian. In accordance with the United Nations Convention on the Rights of the Child, every child has the right to be informed and to express their views freely on matters relevant to them, and these views should be considered in accordance with the child’s maturity43. Although a younger child cannot legally give consent, the child remains free to assent or dissent after being informed in age-appropriate language44.

The use of AI in pediatric care should not infringe the child’s right to an open future45. Such infringement can occur through breaches of confidentiality and privacy or, more generally, when decisions made on the basis of AI unreasonably narrow the child’s future options.

Justice and transferability

Justice is defined as fairness in access to AI18,19, data18, and the benefits of AI18,46, together with the prevention of bias18,19 and discrimination18,19. Justice encompasses equity for all, including vulnerable groups such as minority groups, mothers-to-be, and children. AI must benefit all children, including children of different ages, ethnicities, geographical regions, and socioeconomic conditions.

Underprivileged communities, including their children, are similarly disadvantaged in the digital world47. Technology, including AI, may increase inequality in under-resourced, less-connected communities48 owing to limited access to technology and lower digital literacy. This limits the ability of healthcare teams in these communities to leverage AI in both adult and pediatric medicine. Moreover, machine learning algorithms trained on pediatric data from developed countries may not be applicable to children in less developed countries, resulting in incorrect predictions. AI applications trained on such non-representative populations can potentially perpetuate rather than reduce bias49. AI systems therefore risk compromising children’s right to equitable access to the highest attainable standard of healthcare43.

However, AI can also promote equality by connecting under-developed communities with developed communities. The Pediatric Moonshot project was launched in 2020 to reduce healthcare inequity, lower costs, and improve outcomes for children globally50. It aims to link all the children’s hospitals in the world on the cloud by creating privacy-preserving, real-time AI applications based on access to data. Edge zones have been deployed on three continents (North America, South America, and Europe). Because there is a shortage of specialist pediatricians in underdeveloped countries, the Pediatric Moonshot project includes Mercury, a global image-sharing network that allows hospitals and clinics that are not children’s hospitals to share images with pediatricians in children’s hospitals for expert opinion. The project also includes Gemini, an AI research lab for children, designed to pioneer privacy-preserving, decentralized training of AI applications in child health that can also be deployed on mobile devices for use by doctors serving underprivileged communities.
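The text does not specify how Gemini performs decentralized training. One widely used technique for privacy-preserving, decentralized training is federated averaging, in which each site trains on its own data and only model parameters, never patient records, leave the site. The Python sketch below applies it to a toy one-feature linear model; the function names, learning rate, number of rounds, and data are illustrative assumptions, and a real deployment would typically add safeguards (such as secure aggregation) that are omitted here.

```python
from typing import List, Sequence, Tuple

Params = Tuple[float, float]             # (slope w, intercept b) of y = w*x + b
Dataset = Sequence[Tuple[float, float]]  # (x, y) pairs held privately by one site


def local_update(params: Params, data: Dataset, lr: float = 0.05, epochs: int = 50) -> Params:
    """Full-batch gradient descent on mean squared error, run locally at one site.

    The default learning rate assumes small, roughly standardized feature values.
    """
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def federated_average(updates: List[Tuple[Params, int]]) -> Params:
    """Average site parameters, weighted by each site's number of samples."""
    total = sum(n for _, n in updates)
    w = sum(p[0] * n for p, n in updates) / total
    b = sum(p[1] * n for p, n in updates) / total
    return w, b


def train_federated(sites: List[Dataset], rounds: int = 20) -> Params:
    """Each round: broadcast global parameters, train locally, average the results."""
    global_params: Params = (0.0, 0.0)
    for _ in range(rounds):
        updates = [(local_update(global_params, data), len(data)) for data in sites]
        global_params = federated_average(updates)
    return global_params
```

In this sketch only `(w, b)` and a sample count cross site boundaries; the `(x, y)` records stay in each site’s own list.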

Algorithmic bias is the systematic under- or over-prediction of probabilities for a specific population, such as children. Fairness (unbiasedness) is multifaceted, has many different definitions, and can be measured by various metrics51. Fairness metrics used for AI models in healthcare include well-calibration, balance for the positive class, and balance for the negative class. Importantly, these three conditions cannot in general be satisfied simultaneously by an AI model, except in very specific cases such as perfect prediction or equal base rates across groups52. Hence, there is no universal, one-size-fits-all definition of fairness, and some definitions are incompatible with others. The appropriate definition and metric of fairness depend largely on the healthcare context.
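The three conditions named above can be checked group by group. In their usual formulation, well-calibration means that, within each group, among cases given a score of about p, roughly a fraction p are truly positive; balance for the positive class means truly positive cases receive the same average score in every group; and balance for the negative class means the same for truly negative cases. The Python sketch below reports these quantities per group. The equal-width binning, group names, and toy scores are assumptions chosen for illustration, and a real audit would need far larger samples and confidence intervals.

```python
from collections import defaultdict
from typing import Dict, Optional, Sequence


def _mean(values):
    return sum(values) / len(values) if values else None


def group_fairness_report(scores: Sequence[float], labels: Sequence[int],
                          groups: Sequence[str],
                          n_bins: int = 5) -> Dict[str, Dict[str, Optional[float]]]:
    """Per-group quantities behind calibration and positive/negative class balance."""
    rows_by_group = defaultdict(list)
    for s, y, g in zip(scores, labels, groups):
        rows_by_group[g].append((s, y))

    report = {}
    for g, rows in rows_by_group.items():
        # Calibration: within each score bin, compare mean score with observed positive rate.
        bins = defaultdict(list)
        for s, y in rows:
            bins[min(int(s * n_bins), n_bins - 1)].append((s, y))
        gaps = [abs(_mean([s for s, _ in b]) - _mean([y for _, y in b])) for b in bins.values()]
        report[g] = {
            "max_calibration_gap": max(gaps),
            # Balance for the positive class: average score of truly positive cases.
            "mean_score_positives": _mean([s for s, y in rows if y == 1]),
            # Balance for the negative class: average score of truly negative cases.
            "mean_score_negatives": _mean([s for s, y in rows if y == 0]),
        }
    return report


def balance_gaps(report: Dict[str, Dict[str, Optional[float]]]) -> Dict[str, float]:
    """Largest between-group difference in mean score, for each class."""
    out = {}
    for cls, key in (("positive_class", "mean_score_positives"),
                     ("negative_class", "mean_score_negatives")):
        values = [r[key] for r in report.values() if r[key] is not None]
        out[cls] = max(values) - min(values) if values else 0.0
    return out
```

A large between-group difference in any of these quantities signals a potential fairness problem; which difference matters most depends on the clinical context, as the text notes.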

AI-enabled devices trained only on adult data may underperform when used in children. Several studies have investigated the use of adult AI in pediatric patients, and the results highlight the difficulty of generalizing AI across the age spectrum53,54,55,56,57. For example, AI developed to detect vertebral fractures in adults was unreliable in children, with a sensitivity of only 36% for the detection of mild vertebral fractures54. A deep learning algorithm, EchoNet-Peds, trained on pediatric echocardiograms, estimated ejection fraction significantly better than an adult model applied to the same data55. As pediatric care is commonly undertaken in facilities that manage both adults and children, AI-enabled devices not evaluated in children could unwittingly be used on children by healthcare providers, resulting in adverse outcomes. Thus far, most AI-driven radiology solutions have been designed for adult patients. Recently, radiology imaging advocacy groups have appealed to the US Congress to create policies addressing the lack of AI-based innovations tailored specifically to pediatric care58.
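One practical safeguard suggested by these findings is to report performance separately for children and adults rather than as a single pooled figure. The Python sketch below computes sensitivity (true positives divided by all truly positive cases) per age band and flags bands that fall below a chosen threshold. The cut-off at 18 years, the band names, the threshold of 0.8, and the toy records are illustrative assumptions, not values from the cited studies.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

Record = Tuple[float, int, int]  # (age in years, true label, predicted label); 1 = condition present


def age_band(age_years: float) -> str:
    """Hypothetical two-band split at 18 years."""
    return "pediatric" if age_years < 18 else "adult"


def sensitivity(pairs) -> Optional[float]:
    """TP / (TP + FN); None when there are no truly positive cases."""
    positives = [(t, p) for t, p in pairs if t == 1]
    if not positives:
        return None
    return sum(1 for _, p in positives if p == 1) / len(positives)


def stratified_sensitivity(records: Iterable[Record],
                           min_sensitivity: float = 0.8) -> Dict[str, Dict[str, object]]:
    """Sensitivity per age band, with a flag for bands below min_sensitivity."""
    by_band = defaultdict(list)
    for age, truth, pred in records:
        by_band[age_band(age)].append((truth, pred))
    result = {}
    for band, pairs in by_band.items():
        sens = sensitivity(pairs)
        result[band] = {
            "sensitivity": sens,
            # None means the band had no positive cases, so it cannot be judged.
            "below_threshold": None if sens is None else sens < min_sensitivity,
        }
    return result
```

A pooled sensitivity over all of these records could look acceptable while the pediatric band alone fails, which is exactly the situation stratification is meant to expose.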

It is therefore important to consider the transferability of AI systems to the context of pediatric healthcare. Transferability is a measure of how effectively a health intervention, initially evaluated and validated in one context, can be applied in another59. AI models are prone to systematic bias arising from their training data, which limits their range of application. Even if the training data originate from a diverse population, differences in the amount of data available for each subgroup can greatly skew outputs. Children from diverse backgrounds may experience vastly different health challenges owing to factors such as demographic characteristics, upbringing, culture, access to healthcare services, and their surrounding environment. Failure to account for these differences could lead to bias and disparities in the quality of care, disproportionately affecting vulnerable children.

Transparency and explainability

Transparency includes both technological transparency and organizational transparency. Technological transparency refers to communicating and disclosing the use of AI to stakeholders18,19, including the healthcare team, pediatric patients, and their parents or guardians. Parents value transparency, and disclosure pathways should be developed to support this expectation60. Transparency also refers to efforts to increase the explainability and interpretability of AI-enabled devices18.

Organizational transparency refers to the disclosure of conflicts of interest to patients and parents. It is not uncommon for AI-enabled mobile health applications to have both a diagnostic and a therapeutic arm, in which a diagnosis is followed by redirection of the user to an e-commerce platform selling therapeutic products, as on esthetic medicine websites that are also used by adolescents. Appropriate disclosure of any conflicts of interest between the developer of the AI diagnostic app and the manufacturer of the recommended therapeutic products is frequently absent.

Transparency is seen as a key enabler of the other ethical principles: only with transparency and understanding can there be non-maleficence, autonomy18, and trust46,61,62,63.

Privacy

Privacy relates to the need for data protection and data security18. Although privacy is a right of all children under the UN Convention on the Rights of the Child43, adolescent privacy laws on consent and privacy regarding substance abuse, mental health, contraception, human immunodeficiency virus infection, and other sexually transmitted infections vary markedly, not only between countries but also between states within the same country64. This creates challenges for developers building AI systems for these health conditions in older children.

Fitness trackers, wearables, and digital health apps such as menstruation-tracking, sleep-tracking, and mental health apps are popular among adolescents65. These commercial apps collect sensitive data, including real-time geolocation and reported or inferred emotional states65. Because mobile phone apps collect a large amount of identifying data, it is almost impossible to de-identify the data in order to protect privacy66. Leakage of sensitive health data can result in stigma and discrimination; although this harms patients of all ages, the vulnerability and young age of children mean that any inadvertent disclosure of such data would have longer-lasting effects for them65.

De-identified data are typically used to train AI systems. However, there is a real possibility that de-identified pediatric data could be re-identified, particularly for children with rare genetic diseases, resulting in an infringement of privacy and possible harm. Larger datasets that include data from pediatric patients are needed for the unbiased training of AI-enabled devices used in children. Unfortunately, assembling such datasets may result not only in a loss of autonomy, but also in the possibility of re-identification and loss of privacy for certain children and their families.
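The re-identification risk described above can be made concrete with k-anonymity, a standard privacy measure: a dataset is k-anonymous if every combination of quasi-identifiers (attributes such as age band, region, and sex that are not names but can still single someone out) is shared by at least k records. The Python sketch below, with hypothetical field names and a toy dataset, shows how a single child with a rare diagnosis forms a group of one even after names are removed. k-anonymity is only one of several privacy models, and passing a k-anonymity check does not guarantee that re-identification is impossible.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def reidentification_risk(records: Sequence[Dict[str, str]],
                          quasi_identifiers: Sequence[str],
                          k: int = 5) -> Tuple[int, List[Dict[str, str]]]:
    """Return the dataset's k (smallest group size) and the records in groups smaller than k."""
    if not records:
        raise ValueError("no records to assess")
    # Records sharing the same quasi-identifier values are indistinguishable from one another.
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    group_sizes = Counter(keys)
    dataset_k = min(group_sizes.values())
    at_risk = [r for r, key in zip(records, keys) if group_sizes[key] < k]
    return dataset_k, at_risk
```

With five de-identified records of children with asthma and one with a hypothetical rare syndrome, all sharing the same age band, region, and sex, the rare-disease record is the only one in a group smaller than k, so the dataset as a whole is only 1-anonymous.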

Dependability

Dependability refers to the need for AI systems to be robust, secure, and resilient, such that, in the event of a malfunction, the system does not put the patient or the clinical setting in an unsafe state7. This principle is especially important for pediatric patients, who, compared with adults, may be less able to voice concerns or understand risks, and less likely to notice when an adverse event has occurred. Without proper supervision, such malfunctions can be catastrophic.
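A common way to design for this is to make the system fail safe: when the input lies outside what the model was validated for, or the model errors or returns an implausible value, the system returns no prediction and defers to the clinician rather than guessing. The Python sketch below wraps an arbitrary model function in this way; the validated age range of 0 to 18 years, the `age_years` field, and the status strings are hypothetical choices for illustration.

```python
import math
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass(frozen=True)
class SafeResult:
    prediction: Optional[float]  # None whenever the system defers
    status: str                  # "ok" or "deferred"
    reason: str


def safe_predict(model: Callable[[dict], float], patient: dict,
                 validated_age_range: Tuple[float, float] = (0.0, 18.0)) -> SafeResult:
    """Run the model only inside its validated envelope; otherwise defer to a clinician."""
    low, high = validated_age_range
    age = patient.get("age_years")
    if age is None or not (low <= age < high):
        return SafeResult(None, "deferred", "patient outside validated age range")
    try:
        p = float(model(patient))
    except Exception as exc:  # any model failure leads to the safe state, never a guess
        return SafeResult(None, "deferred", f"model error: {exc}")
    if math.isnan(p) or not (0.0 <= p <= 1.0):
        return SafeResult(None, "deferred", "implausible model output")
    return SafeResult(p, "ok", "")
```

The same wrapper also addresses the earlier concern about adult-only models being used on children: a model validated only for adults would simply be given an adult age range and would defer for every child.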

Auditability

Auditability is the requirement for an AI system to document its decision-making process via an “audit trail” that captures input and output values as well as changes in performance and model state7. This layer of transparency is critical for understanding how the model functions and evolves over time. In pediatric care, it allows clinicians to ensure that recommendations made by an AI-enabled system align with the needs of children and to identify any systematic error that may disproportionately affect them. The audit log also allows clinicians to evaluate changes within the system over time. For medico-legal purposes, the audit trail for AI used in pediatric patients may need to be retained until the patient reaches the age of majority (18 years) plus an additional 3 years, that is, until the patient is 21 years old7.
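The Python sketch below shows one way an audit-trail entry might capture the model version, inputs, and outputs, together with a retention date computed as the child’s date of birth plus 21 years, following the rule quoted above. The field names are hypothetical, actual retention periods depend on local law, and rounding a 29 February birthday forward to 1 March (so that the record is kept slightly longer rather than shorter) is a design assumption.

```python
import json
from dataclasses import dataclass
from datetime import date, datetime, timezone

RETENTION_AGE_YEARS = 21  # age of majority (18) + 3 years; jurisdiction-dependent assumption


def add_years(d: date, years: int) -> date:
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        # 29 February with a non-leap target year: round forward to 1 March.
        return date(d.year + years, 3, 1)


def retention_until(date_of_birth: date) -> date:
    return add_years(date_of_birth, RETENTION_AGE_YEARS)


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str      # when the prediction was made (UTC, ISO 8601)
    model_version: str  # identifies the model state that produced the output
    input_json: str     # the input values, serialized with sorted keys
    output: str         # the output value shown to the clinician
    retain_until: str   # the entry must be kept at least until this date (ISO 8601)


def make_audit_entry(model_version: str, model_input: dict, output: str,
                     date_of_birth: date) -> AuditEntry:
    return AuditEntry(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        input_json=json.dumps(model_input, sort_keys=True),
        output=output,
        retain_until=retention_until(date_of_birth).isoformat(),
    )
```

In practice such entries would typically be appended to write-once storage so that they cannot be silently altered; that part is outside this sketch.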

Knowledge management

Children’s health can be significantly affected by a wide range of factors, from genetic to environmental. These factors can fluctuate widely within short periods of time and vary among children. As a result, AI models for pediatric healthcare may become outdated and less effective over time, so their performance needs to be monitored and their underlying knowledge kept up to date.
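One way to notice a model becoming outdated is to keep comparing its recent performance with the performance measured at validation. The Python sketch below tracks accuracy over a sliding window of recent cases with confirmed outcomes and flags the model for review when accuracy drops more than a tolerance below the baseline. The class name, baseline, tolerance, and window size are hypothetical, and accuracy stands in for whichever metric suits the clinical task.

```python
from collections import deque
from typing import Hashable, Optional


class PerformanceMonitor:
    """Tracks recent accuracy and flags possible drift (hypothetical thresholds)."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop out automatically

    def record(self, prediction: Hashable, actual: Hashable) -> None:
        self.outcomes.append(prediction == actual)

    def recent_accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        # Only judge once the window is full, to avoid alarms from a handful of cases.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.recent_accuracy() < self.baseline - self.tolerance
```

A flag from such a monitor would be a prompt for human review and possible retraining, not an automatic model update.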

Accountability

Accountability is the requirement for the organizations responsible for creating, deploying, and maintaining an AI system to actively supervise its use and address any concerns raised7. As mentioned above, children are an especially vulnerable population who may be unaware of the potential risks of AI systems, so it falls to parents and clinicians to voice concerns regarding the child’s safety. Accountability ensures that potential failures of AI systems do not disproportionately burden individual clinicians, but are addressed in a way that protects both healthcare providers and the children in their care.

Accountability also encompasses professional liability. The clinician in charge of a patient is potentially liable for any harm arising from the use of an AI-enabled system on their pediatric patients, which puts their professional license at risk. In the future, clinicians could also be held accountable for failing to use AI-enabled systems on their patients if such use becomes the standard of care.

Trust

Trust refers to trustworthy AI and is a byproduct of the ethical principles above. It is generally recognized that trust is needed for AI adoption and for AI to fulfill its potential for good. Conversely, it can be argued that trust is the one ethical principle that should never be maximized: we should never place complete trust in an AI-enabled medical device.
